We're far from complete, but getting better:

The DB knows NS/MX records for 12.829.155 domains. More are added daily until we're complete.

The DB currently knows 1.074.097 nameservers (counted by hostname, not IP!).
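Counting by hostname gives a different figure than counting by IP, because several nameserver hostnames can share one address and one hostname can resolve to several addresses. Here is a minimal Python sketch with made-up (hostname, IP) pairs, not serversniff data. `count_nameservers` is a hypothetical helper:

```python
# Made-up nameserver (hostname, IP) pairs; not real serversniff data.
ns_records = [
    ("ns1.example.com", "192.0.2.1"),
    ("NS1.Example.com.", "192.0.2.1"),    # same host, different spelling
    ("ns2.example.com", "192.0.2.1"),     # another hostname on the same IP
    ("ns3.example.com", "192.0.2.1"),
    ("ns1.example.net", "198.51.100.7"),
    ("ns1.example.net", "198.51.100.8"),  # one hostname with two IPs
]


def count_nameservers(records):
    """Return (distinct hostnames, distinct IPs) for (hostname, ip) pairs."""
    # DNS names are case-insensitive and may carry a trailing root dot.
    hostnames = {host.lower().rstrip(".") for host, _ in records}
    ips = {ip for _, ip in records}
    return len(hostnames), len(ips)


by_host, by_ip = count_nameservers(ns_records)
print(f"{by_host} nameservers by hostname, {by_ip} by IP")
# prints "4 nameservers by hostname, 3 by IP"
```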

tom

Friday, June 22, 2007

Statistic-Figure A: 19.155.784

I'm not really into statistics and I don't do them regularly. But I'd like to remind myself that serversniff.net currently knows more than 19.155.784 unique domains with at least one resolving host. This should be around 15 percent of all known domains on the internet.

We're still adding around 100.000 new domain names per day, focusing on domains outside the .com/.net space. To get a glimpse of the domains being added, take a look at http://tomdns.net, where you can see the newest domains in real time.

tom

Spam from serversniff.net

Some asshole sent out a spam wave with random serversniff.net sender addresses. The little sucker put in a Return-Path and a Sender with @serversniff.net. Since I have set up a catch-all mail account for serversniff.net, I get all those nice complaints and bounced mails. Hundreds of them! Argh.

And yes Sir, no Ma'am, neither my webserver nor my mailservers have been hacked. Take a look at the mail headers:

Return-Path: <stasIsaev@serversniff.net>

Received: (qmail 25562 invoked by uid 0); 22 Jun 2007 12:17:06 +0300

Received: from 220.125.204.181 by post (envelope-from <stasIsaev@serversniff.net>, uid 92) with qmail-scanner-2.01 (clamdscan: 0.90/2659. Clear:RC:0(220.125.204.181):. Processed in 0.239443 secs); 22 Jun 2007 09:17:06 -0000

Received: from unknown (HELO ?220.125.204.181?) (220.125.204.181) by post.ziniur.lt with SMTP; 22 Jun 2007 12:17:04 +0300

Received: from [220.125.204.181] (183.178.25.193) by stasIsaev@serversniff.net with SMTP; for <gvitkauskasd@ziniur.lt>; Fri, 22 Jun 2007 19:17:22 +0100

MIME-Version: 1.0

None of these sender IPs belongs to serversniff.net's infrastructure. Seems it's time to drop the catch-all address for serversniff.net.
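You can run the same check on any bounce by pulling every IP address out of the Received: headers and comparing them with your own address ranges. Here is a minimal Python sketch using only the standard library (`email.parser`, `ipaddress`, `re`). The headers are the ones quoted above, rewritten with the folded continuation lines a raw mail would have. `OWN_NETWORKS` is a placeholder documentation range standing in for serversniff.net's real servers, which the post does not list. Only Received: lines added by servers you trust are reliable. Anything further down, such as the "by stasIsaev@serversniff.net" line, can be forged by the sender.

```python
import ipaddress
import re
from email.parser import HeaderParser

# Headers from the bounce above, with folded continuation lines as in raw mail.
RAW_HEADERS = """\
Return-Path: <stasIsaev@serversniff.net>
Received: (qmail 25562 invoked by uid 0); 22 Jun 2007 12:17:06 +0300
Received: from 220.125.204.181 by post (envelope-from <stasIsaev@serversniff.net>, uid 92) with qmail-scanner-2.01
 (clamdscan: 0.90/2659.
 Clear:RC:0(220.125.204.181):.
 Processed in 0.239443 secs); 22 Jun 2007 09:17:06 -0000
Received: from unknown (HELO ?220.125.204.181?) (220.125.204.181)
 by post.ziniur.lt with SMTP; 22 Jun 2007 12:17:04 +0300
Received: from [220.125.204.181] (183.178.25.193)
 by stasIsaev@serversniff.net with SMTP;
 for <gvitkauskasd@ziniur.lt>; Fri, 22 Jun 2007 19:17:22 +0100
MIME-Version: 1.0
"""

# Placeholder (RFC 5737 documentation range), NOT serversniff.net's real network.
OWN_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]

# Four dot-separated groups of 1-3 digits; validated by ipaddress afterwards.
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def received_ips(raw):
    """Collect each distinct IPv4 address mentioned in Received: headers, in order."""
    msg = HeaderParser().parsestr(raw)
    found = []
    for value in msg.get_all("Received", []):
        for candidate in IPV4.findall(value):
            try:
                ip = ipaddress.ip_address(candidate)
            except ValueError:  # e.g. 999.1.1.1 matches the regex but is invalid
                continue
            if ip not in found:
                found.append(ip)
    return found


def foreign_ips(raw, own=OWN_NETWORKS):
    """Return the relay IPs that lie outside all of our own networks."""
    return [ip for ip in received_ips(raw) if not any(ip in net for net in own)]


for ip in foreign_ips(RAW_HEADERS):
    print(ip)
```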

tom

Monday, June 18, 2007

For the records

For the records: our update lag for domain names is at 443,017 days, and it's increasing. It will keep increasing for some time, because about 450 days ago we did bulk updates: inserting many, many new hosts from big lists at once, with hardly any handling of domains and IPs. Back then there wasn't much data or trigger overhead, and the database was not yet public. I hope to bring the update lag down to 400 days within about a month, and to around 200 days by the end of 2007. I don't really believe that we can get shorter update cycles with our current network bandwidth. But you always have the option to filter outdated records from being displayed, regardless of whether you use serversniff.net, tomdns.net or serversniff's API.
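Hiding outdated records comes down to applying a cutoff to each entry's last-update time. Here is a minimal sketch, assuming each host entry carries a `last_updated` timestamp. `HostRecord` and `fresh_records` are hypothetical names, not serversniff's actual schema or API:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class HostRecord:
    """Hypothetical host entry: hostname, IP and when it was last checked."""
    hostname: str
    ip: str
    last_updated: datetime


def fresh_records(records, max_age_days, now):
    """Keep only records refreshed within the last max_age_days days."""
    cutoff = now - timedelta(days=max_age_days)
    return [r for r in records if r.last_updated >= cutoff]


now = datetime(2007, 6, 18)
records = [
    HostRecord("www.example.com", "192.0.2.10", datetime(2007, 6, 1)),
    HostRecord("mail.example.com", "192.0.2.20", datetime(2006, 3, 1)),
    HostRecord("ns1.example.org", "198.51.100.53", datetime(2007, 1, 15)),
]

# With a 200-day limit the cutoff is 2006-11-30, so the mail host is hidden.
for r in fresh_records(records, max_age_days=200, now=now):
    print(r.hostname, r.ip)
```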

tom

Sunday, June 17, 2007

Serversniff deLux

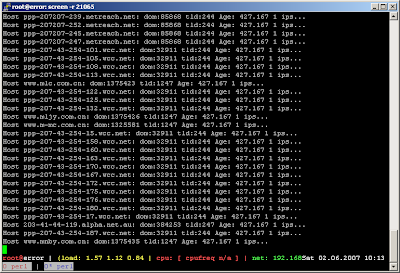

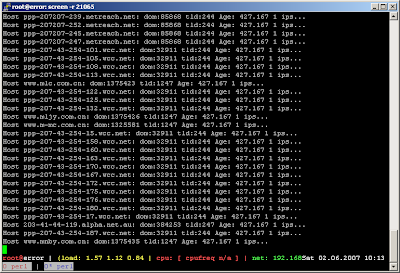

If you ever wondered what serversniff looks like:

It's located in the attic above the garage, where it's hot in the summer and cold in the winter. It's a cheapo system with an Athlon 3-something, one gig of RAM, an old PERC 2/SC SCSI controller ripped from a Dell server and a ProLiant HDD array from eBay.

I suffered occasional blackouts when lightning struck, which damaged the database, so I ordered a brand-new UPS (the small thingy standing on the right), straight from China. We're prepared now.

tom

Saturday, June 02, 2007

crazy ideas

around 500 days ago i had a crazy idea: mapping the net in a database. all domains, all hostnames, all relations of ns- and mx-servers.

i knew a few sites that presumably had this data but would not really let you look it up; whois.sc and netcraft.com were amongst them. that was all i knew. oh, and yes, i knew mysql, and i had worked with sqlite and microsoft's sql server for years.
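A database like that needs three things: a table of domains, a table of hostnames, and the NS/MX relations linking them. Here is one possible layout, sketched with Python's built-in `sqlite3` module. The schema, table names and helpers are hypothetical illustrations, not serversniff's real design:

```python
import sqlite3

# Hypothetical schema: domains, hostnames, their IPs, and NS/MX relations.
SCHEMA = """
CREATE TABLE domain (id INTEGER PRIMARY KEY, name TEXT UNIQUE NOT NULL);
CREATE TABLE host   (id INTEGER PRIMARY KEY, name TEXT UNIQUE NOT NULL);
CREATE TABLE host_ip (
    host_id INTEGER REFERENCES host(id),
    ip      TEXT NOT NULL,
    PRIMARY KEY (host_id, ip)
);
-- kind says whether the host is a nameserver or a mailserver for the domain
CREATE TABLE domain_server (
    domain_id INTEGER REFERENCES domain(id),
    host_id   INTEGER REFERENCES host(id),
    kind      TEXT CHECK (kind IN ('NS', 'MX')),
    PRIMARY KEY (domain_id, host_id, kind)
);
"""


def add_server(conn, domain, host, kind):
    """Record that `host` is an NS or MX server for `domain` (idempotent)."""
    conn.execute("INSERT OR IGNORE INTO domain(name) VALUES (?)", (domain,))
    conn.execute("INSERT OR IGNORE INTO host(name) VALUES (?)", (host,))
    conn.execute(
        """INSERT OR IGNORE INTO domain_server(domain_id, host_id, kind)
           SELECT d.id, h.id, ? FROM domain d, host h
           WHERE d.name = ? AND h.name = ?""",
        (kind, domain, host),
    )


def domains_served_by(conn, host, kind):
    """Reverse lookup: every domain that uses `host` as its NS or MX server."""
    rows = conn.execute(
        """SELECT d.name FROM domain_server ds
           JOIN domain d ON d.id = ds.domain_id
           JOIN host h ON h.id = ds.host_id
           WHERE h.name = ? AND ds.kind = ?
           ORDER BY d.name""",
        (host, kind),
    )
    return [name for (name,) in rows]


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
add_server(conn, "example.com", "ns1.example.net", "NS")
add_server(conn, "example.org", "ns1.example.net", "NS")
add_server(conn, "example.com", "mx.example.com", "MX")
print(domains_served_by(conn, "ns1.example.net", "NS"))
```

The reverse lookup ("which domains use this nameserver?") is the query such a map makes cheap, and the one the sites above would not let you run.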

i expected this to be an adventure. a text adventure, fun.

and indeed, it was fun. the database crashed, servers got blocked, i had errors in my harvesting scripts, and i was overwhelmed when i got around 100 million domains with even more hosts at once.

and now i'm sitting on a bunch of data that keeps getting older. my time is limited, and so are my hardware resources. i have started updating the hostname/IP entries these days. i should have done this earlier, i know, but i didn't. time is limited, remember?

a bit frustrating: the "update lag", the time since the last update of the oldest host entry, currently stands at exactly 427,167 days. most frustrating: it's still increasing.

hmpf.

hmpf.
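One way to read the update lag is as the age of the stalest host entry. If that entry is never refreshed, the lag grows by roughly one day per calendar day. That fits the two posts: 427,167 days on June 2 and 443,017 days on June 18, figures that appear to use a decimal comma. Here is a minimal sketch under that assumed definition. `update_lag_days` and the timestamps are hypothetical:

```python
from datetime import datetime


def update_lag_days(last_updates, now):
    """Age, in fractional days, of the least recently updated entry."""
    oldest = min(last_updates)
    return (now - oldest).total_seconds() / 86400


# Hypothetical last-update timestamps for three host entries.
last_updates = [
    datetime(2007, 5, 30, 8, 0),
    datetime(2006, 3, 27, 20, 0),
    datetime(2007, 1, 2, 12, 0),
]
now = datetime(2007, 6, 2, 0, 0)
print(f"update lag: {update_lag_days(last_updates, now):.3f} days")
```

Refreshing the entries with the newest timestamps does not move this number at all. Only updating the oldest entries brings the lag down.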

tom
